The idea of technology reading human thoughts has moved from science fiction into real-world experiments. From wearable devices to advanced sensors, researchers are exploring how machines can interpret brain signals and human intent. To see how far this technology has come, a small public test was run using available tools that track brain activity, facial expressions, and behavioral patterns. The results were fascinating but limited: machines can detect certain patterns and predict some actions, yet they are far from truly understanding thoughts. The experiment revealed where the technology excels, where it struggles, and what it could mean for everyday life in the near future.

Brain Signals Can Be Detected

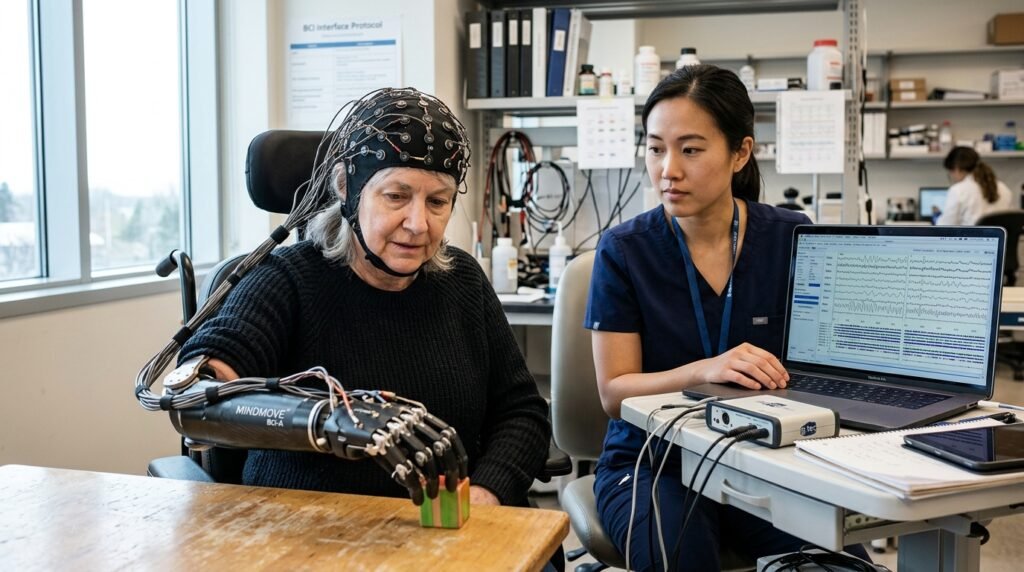

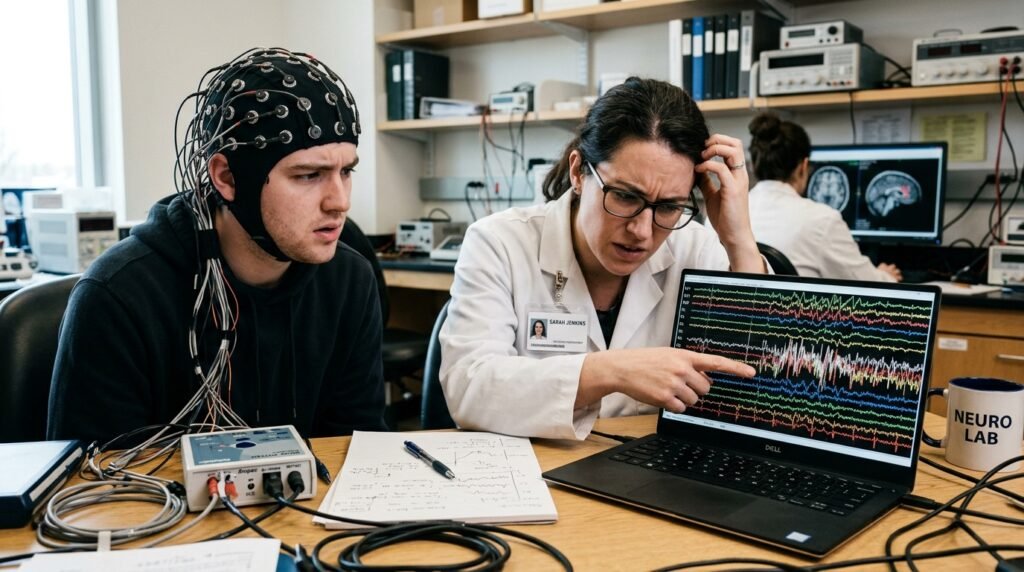

Devices using EEG (electroencephalography) sensors capture electrical activity from the brain through electrodes placed on the scalp. During the test, participants wore headsets that recorded these signals, showing that the technology can identify general mental states such as focus, relaxation, and stress.
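To make the idea of "identifying a general mental state" more concrete, here is a minimal sketch of one common approach: comparing signal power in different frequency bands. It is not the method used in the test. The band limits (alpha at 8 to 12 Hz, beta at 13 to 30 Hz) follow widely cited conventions, while the rule "more alpha than beta means relaxed", the function names, and the synthetic signals are simplifying assumptions for illustration.

```python
import cmath
import math


def band_power(samples, sample_rate, low_hz, high_hz):
    """Sum the spectral power of `samples` between low_hz and high_hz (naive DFT)."""
    n = len(samples)
    power = 0.0
    for k in range(1, n // 2):
        freq = k * sample_rate / n  # frequency represented by DFT bin k
        if low_hz <= freq <= high_hz:
            coeff = sum(
                x * cmath.exp(-2j * math.pi * k * i / n)
                for i, x in enumerate(samples)
            )
            power += abs(coeff) ** 2 / n
    return power


def guess_state(samples, sample_rate):
    """Toy heuristic (an assumption): alpha-dominant is 'relaxed', else 'focused'."""
    alpha = band_power(samples, sample_rate, 8, 12)
    beta = band_power(samples, sample_rate, 13, 30)
    return "relaxed" if alpha > beta else "focused"


# Synthetic one-second signals standing in for real headset data.
sample_rate = 128
relaxed_like = [math.sin(2 * math.pi * 10 * i / sample_rate) for i in range(sample_rate)]
focused_like = [math.sin(2 * math.pi * 20 * i / sample_rate) for i in range(sample_rate)]

print(guess_state(relaxed_like, sample_rate))
print(guess_state(focused_like, sample_rate))
```

Real recordings are far noisier than these clean sine waves, which is one reason such systems report broad states rather than anything specific.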

Thoughts Are Not Directly Read

The system could not read exact thoughts or words. Instead, it interpreted patterns: the technology does not “hear” your mind but makes educated guesses based on incoming signals and previously learned data.

Facial Expressions Add Context

Cameras tracking facial movements helped improve predictions. Subtle changes in expressions provided clues about emotions, making the system more accurate when combined with brain signal data during the public test.
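One simple way to combine two sources like these is to let each produce its own score per emotion and then blend the scores. The sketch below assumes this "late fusion" setup. The function name, the equal weighting, and the example scores are hypothetical and are not taken from the test.

```python
def fuse_scores(eeg_scores, face_scores, eeg_weight=0.5):
    """Blend per-emotion scores from two sources with a weighted average."""
    labels = eeg_scores.keys() & face_scores.keys()  # emotions both sources rated
    fused = {
        label: eeg_weight * eeg_scores[label] + (1 - eeg_weight) * face_scores[label]
        for label in labels
    }
    best = max(fused, key=fused.get)
    return best, fused


# Hypothetical scores: the EEG model is unsure, the camera model is confident.
eeg = {"calm": 0.55, "excited": 0.45}
face = {"calm": 0.20, "excited": 0.80}

label, fused = fuse_scores(eeg, face)
print(label, fused)
```

Here the facial signal tips an uncertain EEG estimate toward a clearer answer, which matches the kind of improvement described above.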

Accuracy Depends on Training

The devices performed better when trained on specific individuals. Personalized calibration improved results, showing that mind-reading technology currently works best when it learns patterns unique to one person over time.
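A rough illustration of why calibration matters: the same raw reading can mean different things for different people. The sketch below assumes each user records a short resting baseline and that later readings are expressed relative to it as a z-score. The names and numbers are made up for the example.

```python
from statistics import mean, stdev


def calibrate(baseline):
    """Return a function that scales readings relative to one user's baseline."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variation; cannot calibrate")

    def normalize(value):
        return (value - mu) / sigma

    return normalize


# Hypothetical resting baselines for two users (arbitrary units).
normalize_a = calibrate([10, 12, 11, 9, 13])
normalize_b = calibrate([20, 22, 21, 19, 23])

reading = 14
print(normalize_a(reading))  # above user A's usual range
print(normalize_b(reading))  # below user B's usual range
```

Without the per-user step, a single fixed threshold would misread at least one of these two people.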

Distractions Affect Results

Background noise and movement reduced accuracy. In a public setting, distractions made it harder for the system to maintain consistent readings, highlighting the challenge of using such technology outside controlled environments.
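One common way to cope with movement is to discard short windows of signal whose amplitude swings are too large to be plausible brain activity. The sketch below assumes that approach. The threshold of 100 (imagined here as microvolts) and the sample values are illustrative assumptions, not figures from the test.

```python
def reject_noisy_windows(windows, max_peak_to_peak):
    """Keep only windows whose peak-to-peak amplitude stays within the limit."""
    return [w for w in windows if max(w) - min(w) <= max_peak_to_peak]


# Hypothetical windows: one quiet, one disturbed by a head movement.
clean = [1.0, -2.0, 1.5, -1.0]
noisy = [1.0, 80.0, -60.0, 2.0]

kept = reject_noisy_windows([clean, noisy], max_peak_to_peak=100)
print(len(kept))
```

The trade-off is that in a busy public setting many windows may be rejected, leaving less usable data and less consistent readings.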

Emotional States Are Easier to Detect

The system was more successful at identifying emotions like excitement or calmness than specific thoughts. Emotional recognition appears to be a more achievable goal for current technology than detailed cognitive interpretation.

Predictions Improve with Data

As more data was collected during the test, the system became slightly better at predicting user responses. This shows that continuous learning plays a key role in improving performance over time.
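The effect of accumulating data can be sketched with a very small learner that keeps a running average per label and assigns each new reading to the closest average. This is an assumed stand-in, not the system used in the test, and the class name, labels, and values are hypothetical.

```python
class RunningCentroids:
    """Nearest-average classifier over one feature, updated one sample at a time."""

    def __init__(self):
        self.sums = {}
        self.counts = {}

    def update(self, label, value):
        """Fold one labeled reading into that label's running average."""
        self.sums[label] = self.sums.get(label, 0.0) + value
        self.counts[label] = self.counts.get(label, 0) + 1

    def predict(self, value):
        """Return the label whose average is closest to `value`."""
        return min(
            self.sums,
            key=lambda label: abs(value - self.sums[label] / self.counts[label]),
        )


model = RunningCentroids()
model.update("calm", 1.0)
model.update("excited", 5.0)
early_guess = model.predict(2.5)  # based on one example per label

# More labeled readings shift both averages.
for value in (0.5, 0.0):
    model.update("calm", value)
for value in (3.0, 3.5):
    model.update("excited", value)
later_guess = model.predict(2.5)

print(early_guess, later_guess)
```

The same reading is classified differently once the averages reflect more examples, which is the basic mechanism behind gradual improvement with data.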

Privacy Concerns Are Significant

Participants expressed concern about how their brain data could be used. Even limited interpretation raised questions about consent, storage, and misuse, making privacy one of the biggest challenges for this technology.

Applications Are Emerging Slowly

Current uses include medical research, assistive devices, and gaming. While the technology is promising, it is still in its early stages and not ready for widespread everyday use.

Misinterpretation Is Common

The system occasionally made incorrect predictions. For example, similar brain patterns sometimes led to different interpretations, showing that reliability is still a major limitation in real-world scenarios.